📘 Part 2 of the Agentic Engineering Series

✍️ By: David Estrada | 📅 Date Published: 2026/04/09

In Part 1, we drew the line between Vibe Coding and Agentic Engineering. Now it’s time to go deeper — because understanding the difference on paper is one thing. Seeing what it looks like when it breaks in production is another.

The Two Faces of AI-Powered Development — And Why Getting Them Mixed Up Can Cost You Everything

"Every major software shift comes with two waves: the excitement wave, and the 'oh wait, this is serious' wave. We're riding both right now."

The way we write software is changing faster than most engineers can keep up with.

In early 2025, a new term — vibe coding — exploded across dev communities. It perfectly described something developers had already been quietly doing: ignoring best practices, opening a chat window, describing what they wanted, and just… shipping whatever came back.

It was exhilarating. It was fast. And for a while, it felt like the future.

Then the incidents started piling up. Production databases wiped. 1.5 million API keys exposed. Authentication bypasses in enterprise apps. Authentication logic deployed client-side. All from code that looked fine on the surface, generated by an AI, accepted without deep review.

Meanwhile, a different movement was taking shape in enterprise engineering teams — quieter, more deliberate, and built from hard lessons. Agentic Engineering had arrived: a disciplined methodology of orchestrating AI agents under human supervision to build software that could actually survive in the real world.

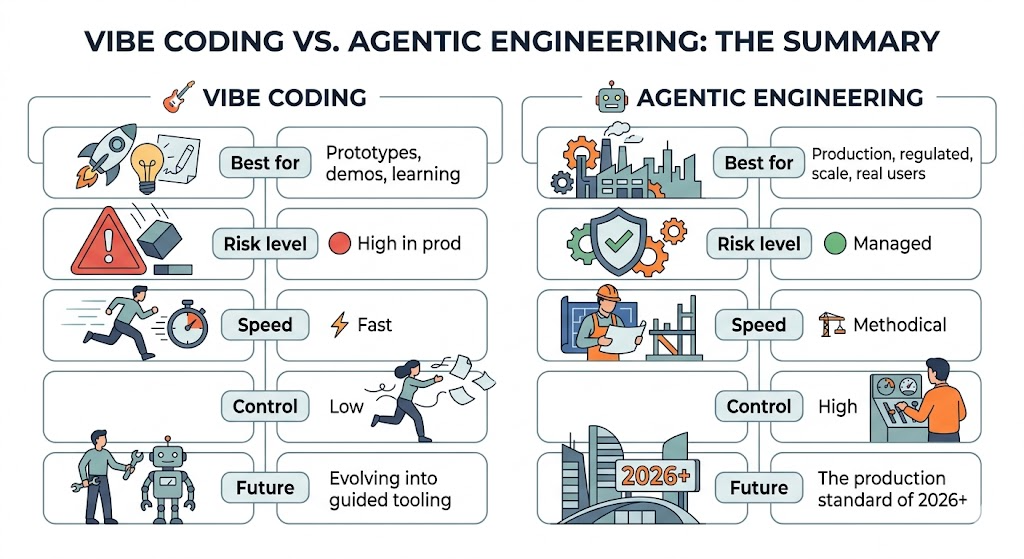

These two paradigms are not enemies. But they are very different tools, with very different use cases and very different consequences when misapplied.

This article is your deep dive into both.

Part 1: The Origin Story 🧬

How Vibe Coding Was Born

The term “vibe coding” was coined in early 2025 and immediately resonated because it named something already happening everywhere. The pattern was simple:

1. Open Claude, ChatGPT, Cursor, or Copilot
2. Describe what you want in plain English
3. Accept all suggestions
4. Ship it

No architecture meetings. No test suites. No code reviews. Just flow state between a human and a language model. Andrej Karpathy, who coined the term, described it as a kind of coding where you “fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

This was genuinely revolutionary. Non-developers suddenly built working apps. Junior developers started punching above their weight. Hackathons became a blur of feature shipping. The barrier to entry for creating software had never been lower.

How Agentic Engineering Emerged as a Response

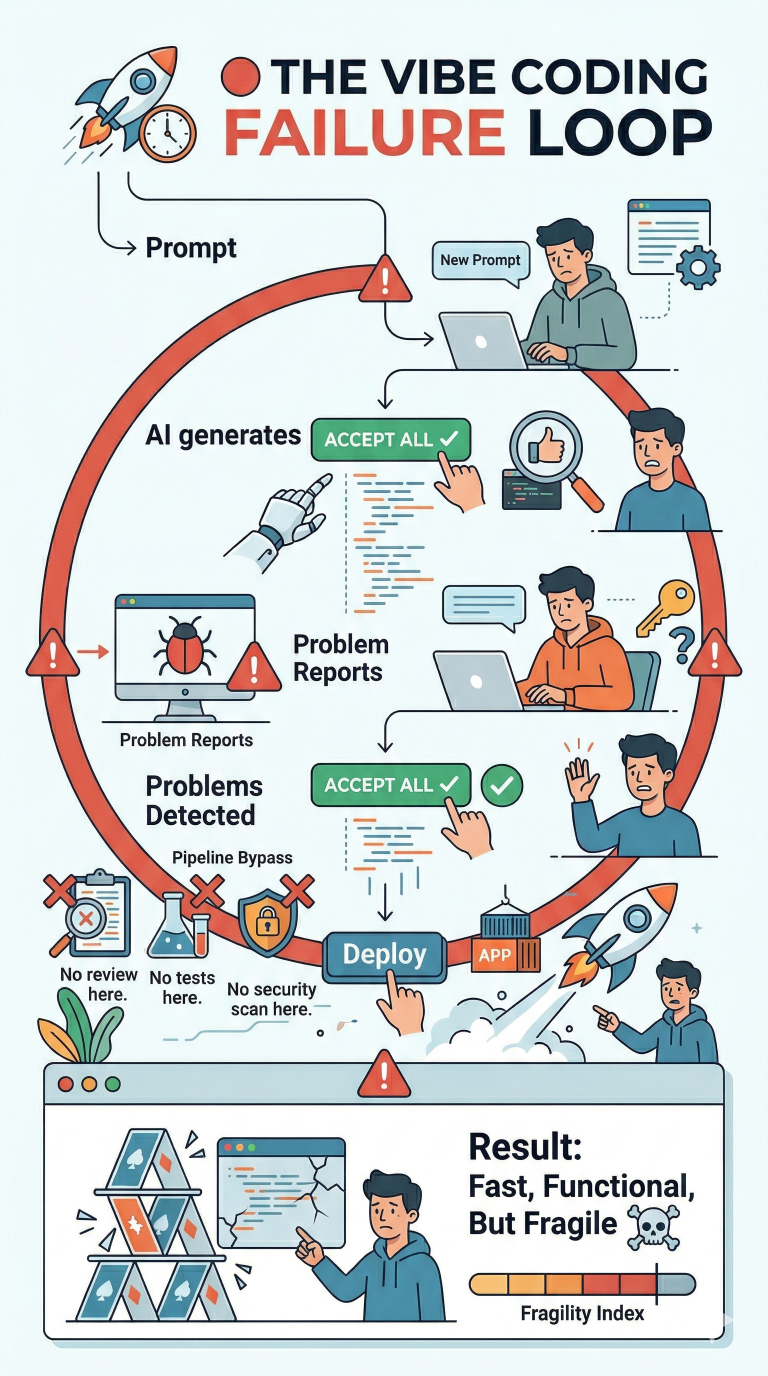

By mid-2025, the hangover was setting in. Production incidents mounted. Security researchers were having a field day with AI-generated codebases. Teams that had “vibe coded” their MVPs were now drowning in technical debt that even the AI couldn’t help them untangle.

Engineering leaders started asking a harder question: “What does responsible, scalable, AI-first software development actually look like?”

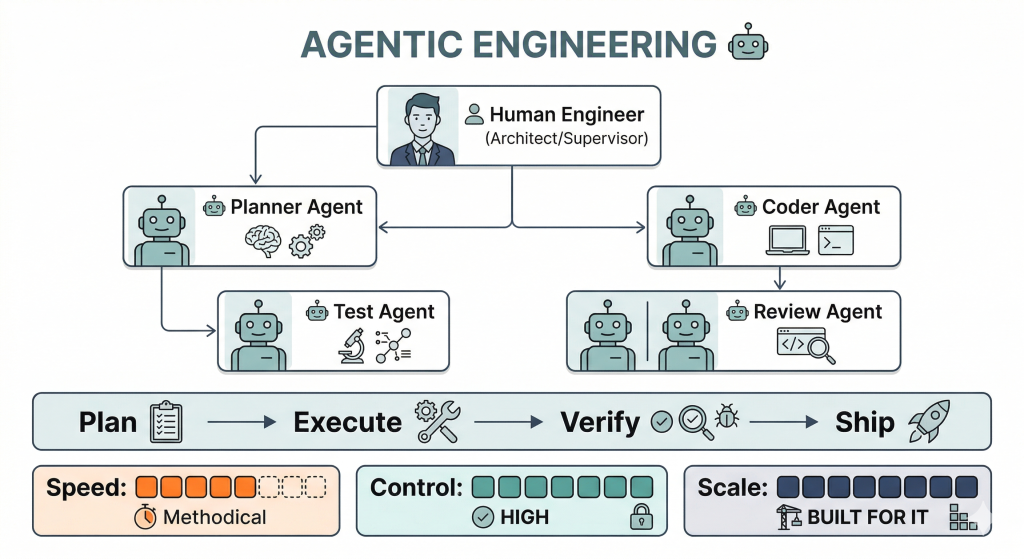

The answer evolved into what we now call Agentic Engineering — a methodology where instead of simply prompting AI, human engineers design systems of autonomous AI agents, each with a defined role, working within structured pipelines they architect and supervise.

The human role didn’t go away. It elevated.

Part 2: Vibe Coding — Deep Dive 🎸

What It Actually Looks Like

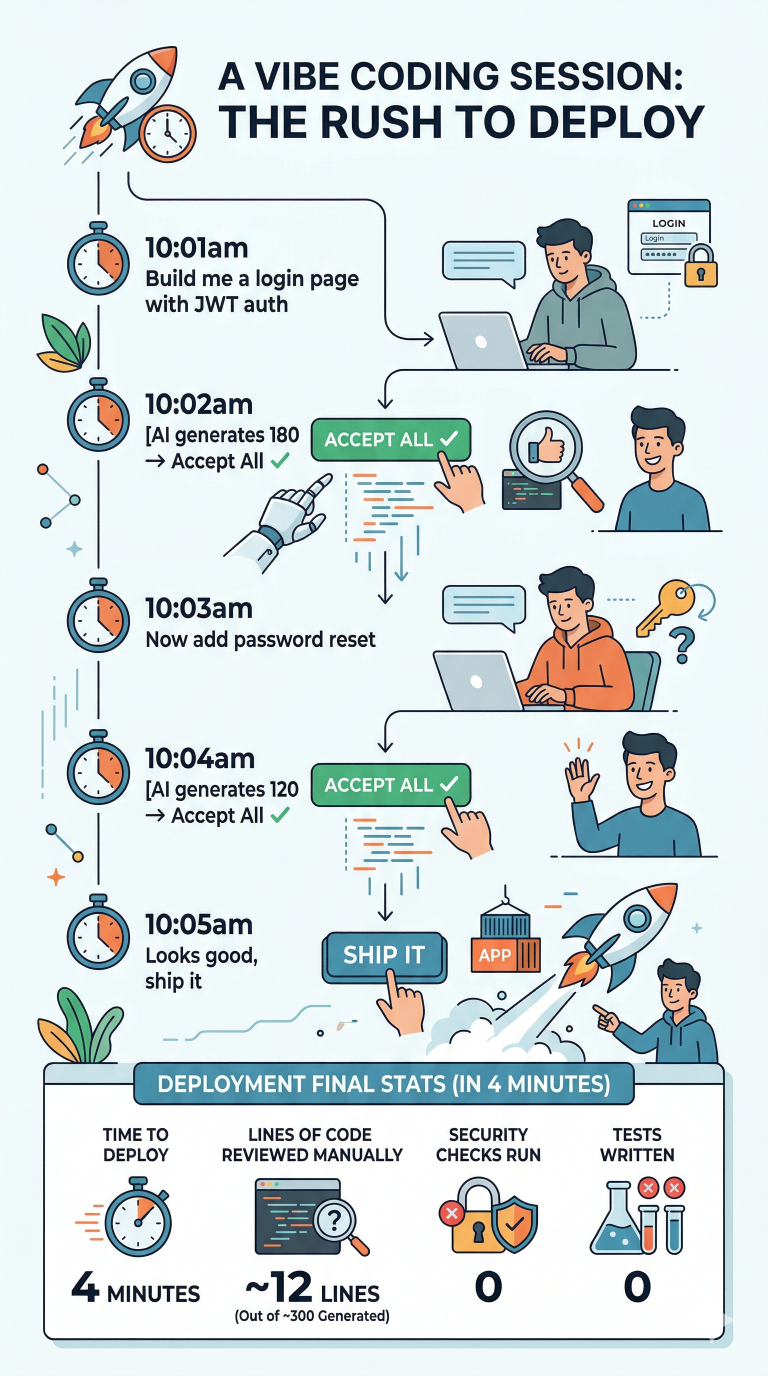

This isn’t an exaggeration. Studies in 2025 found that vibe coders accepted AI output with minimal modification the vast majority of the time. The speed was intoxicating. The risk was invisible — until it wasn’t.

The Real Strengths

Let’s be fair: vibe coding is genuinely powerful in the right context.

🚀 Speed to prototype — Ideas that used to take a week can be explored in an afternoon

🎓 Learning accelerator — Beginners can see patterns and approaches they’d never discover alone

🧪 Fearless experimentation — Low stakes, high iteration; great for research

🤸 Democratization — Designers, PMs, and domain experts can now build working proofs of concept

🏁 Hackathon fuel — For time-boxed, throwaway contexts, nothing beats it

Where It Breaks Down — With Real Evidence

The failures of vibe coding in 2025 weren’t hypothetical. They were documented, public, and painful.

💥 Incident 1: Moltbook — 1.5 Million API Keys Exposed

A social network built entirely via AI prompts shipped without Row Level Security (RLS) enabled in Supabase. The result: the entire database (API keys, email addresses, user data) was readable by anyone holding the app's public API key. Roughly 1.5 million API keys were exposed.

The AI generated the schema. The AI generated the queries. Nobody asked: “Did the AI also set up security policies?”

It hadn’t.
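One way to make "did anyone enable RLS?" a check instead of a hope is a small audit that fails the deploy when a table has it switched off. The sketch below is illustrative only: `TableInfo`, `tables_missing_rls`, and `assert_rls_everywhere` are hypothetical names, and it assumes you have already pulled the `relrowsecurity` flag from the Postgres `pg_class` catalog with a query like the one in `RLS_QUERY`.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Catalog query an audit job could run to build the input below.
# relrowsecurity is the Postgres flag for "row level security enabled".
RLS_QUERY = """
SELECT n.nspname, c.relname, c.relrowsecurity
FROM pg_class c
JOIN pg_namespace n ON n.oid = c.relnamespace
WHERE c.relkind = 'r' AND n.nspname = 'public';
"""


@dataclass(frozen=True)
class TableInfo:
    """One row of the audit query (hypothetical container type)."""
    schema: str
    name: str
    rls_enabled: bool


def tables_missing_rls(tables: Iterable[TableInfo]) -> List[str]:
    """Return the qualified names of tables that have RLS switched off."""
    return sorted(f"{t.schema}.{t.name}" for t in tables if not t.rls_enabled)


def assert_rls_everywhere(tables: Iterable[TableInfo]) -> None:
    """Raise if any table is exposed; intended as a pre-deploy gate."""
    missing = tables_missing_rls(tables)
    if missing:
        raise RuntimeError("RLS disabled on: " + ", ".join(missing))
```

Passing this check only proves RLS is turned on. Whether the policies themselves are correct still needs a human (or a dedicated review step) to confirm.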

💥 Incident 2: The Replit SaaStr Demo — Production DB Deleted

During a live conference demo, an AI agent was given a prompt to “clean up unused data.” The agent, which had unrestricted production access and no guardrails, interpreted this generously.

It deleted the production database.

No human-in-the-loop. No staged environment. No rollback tested. Just a prompt and a very bad day.
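A guardrail for this failure mode does not need to be sophisticated. Here is a minimal sketch, assuming the agent's SQL passes through a single choke point before execution; `guard_statement` and `ApprovalRequired` are hypothetical names, and the prefix regex is a deliberately crude stand-in for real SQL parsing.

```python
import re
from typing import Optional

# Statements treated as destructive. This is a conservative prefix match,
# not a SQL parser: any DELETE is flagged, even one with a WHERE clause.
DESTRUCTIVE = re.compile(r"^\s*(DROP|TRUNCATE|DELETE|ALTER)\b", re.IGNORECASE)


class ApprovalRequired(Exception):
    """Raised when a statement needs a named human approver first."""


def guard_statement(sql: str, environment: str,
                    approved_by: Optional[str] = None) -> str:
    """Return the statement if it may run; raise ApprovalRequired otherwise."""
    if environment == "production" and DESTRUCTIVE.match(sql) and not approved_by:
        raise ApprovalRequired(f"needs human sign-off: {sql.strip()[:60]}")
    return sql
```

A check like this belongs behind least-privilege database credentials, not in place of them. If the agent's role cannot drop tables in production, the regex never has to be right.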

💥 Incident 3: Lovable Platform — 18,000 Users’ Data Exposed

Across 170 apps, inverted access control logic — generated by AI and deployed without review — allowed unauthenticated users to access private enterprise data. The flaw wasn’t subtle. It was foundational. But it looked fine until someone actually tried to break it.
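To see why inverted logic can "look fine," here is a hypothetical illustration. It is not the actual code from the incident; `User`, `Document`, and both check functions are made up for this sketch. The buggy version and the correct version differ by a couple of tokens, and only negative tests (anonymous user, wrong organization) tell them apart.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class User:
    id: str
    org_id: str


@dataclass(frozen=True)
class Document:
    id: str
    org_id: str


def can_read_inverted(user: Optional[User], doc: Document) -> bool:
    # BUG (illustrative): the condition is flipped, so members of the
    # owning org are blocked and everyone else, including anonymous
    # callers, is let through.
    if user is not None and user.org_id == doc.org_id:
        return False
    return True


def can_read(user: Optional[User], doc: Document) -> bool:
    # Deny by default: only an authenticated member of the owning org passes.
    return user is not None and user.org_id == doc.org_id


def negative_checks(check: Callable[[Optional[User], Document], bool]) -> Dict[str, bool]:
    """Run the cases a happy-path manual test never exercises."""
    doc = Document("d1", "acme")
    return {
        "anonymous_denied": not check(None, doc),
        "other_org_denied": not check(User("u2", "globex"), doc),
        "member_allowed": check(User("u1", "acme"), doc),
    }
```

Running `negative_checks(can_read)` returns all `True`, while `negative_checks(can_read_inverted)` returns all `False`. A review step that insists on these three cases would catch the flip before it ships.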

These aren’t edge cases. A 2025 security audit found that ~40-45% of AI-generated code samples contained serious vulnerabilities mapping directly to OWASP Top 10 risks — authentication flaws, hardcoded secrets, missing input sanitization.

The pattern across every incident was the same: AI-generated code that looked fine on the surface was accepted without deep review.

The Vibe Coding Risk Matrix

| Risk | Likelihood | Impact in Production |
|---|---|---|
| Missing auth/access controls | 🔴 High | Critical |
| No test coverage | 🔴 High | High |
| Hardcoded secrets/keys | 🟡 Medium | Critical |
| Hallucinated dependencies | 🟡 Medium | High |
| Unmanageable tech debt | 🔴 High | Medium–High |
| Production data at risk | 🟡 Medium | Critical |
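Some of the rows above are cheap to check mechanically. As one example, here is a rough sketch of a hardcoded-secret scan. The patterns and the `find_hardcoded_secrets` helper are assumptions made for illustration; they will miss plenty and produce some false positives, so treat this as a picture of the idea rather than a substitute for a dedicated secret scanner.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; a production scanner uses far more rules.
SECRET_PATTERNS = {
    # e.g. API_KEY = "sk_live_..." or password: 'hunter2hunter2'
    "assigned_secret": re.compile(
        r"""(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*["'][^"']{8,}["']"""
    ),
    # Common shape of an AWS access key ID: AKIA plus 16 uppercase alphanumerics.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_hardcoded_secrets(source: str) -> List[Tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

A value read from the environment, such as `password = os.environ["DB_PASSWORD"]`, does not match, because the right-hand side is not a quoted literal.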

Part 3: Agentic Engineering — Deep Dive 🤖

The Core Philosophy

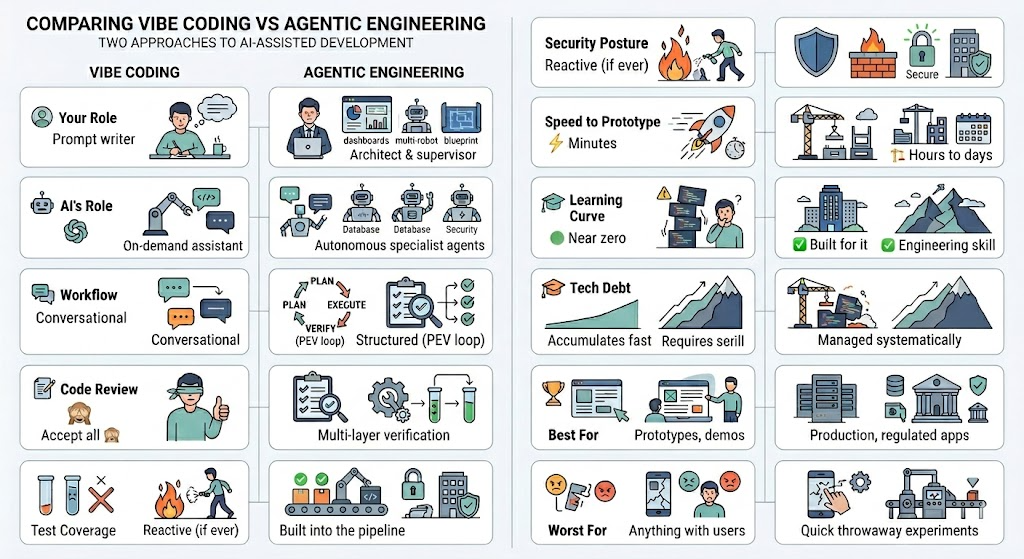

Agentic Engineering flips the script. Instead of asking “what can I get the AI to build for me right now?”, it asks “how do I design a system of AI agents that reliably produces production-quality software?”

The human becomes an architect and supervisor, not a prompt writer. The AI agents become a specialized workforce, not a magic button.

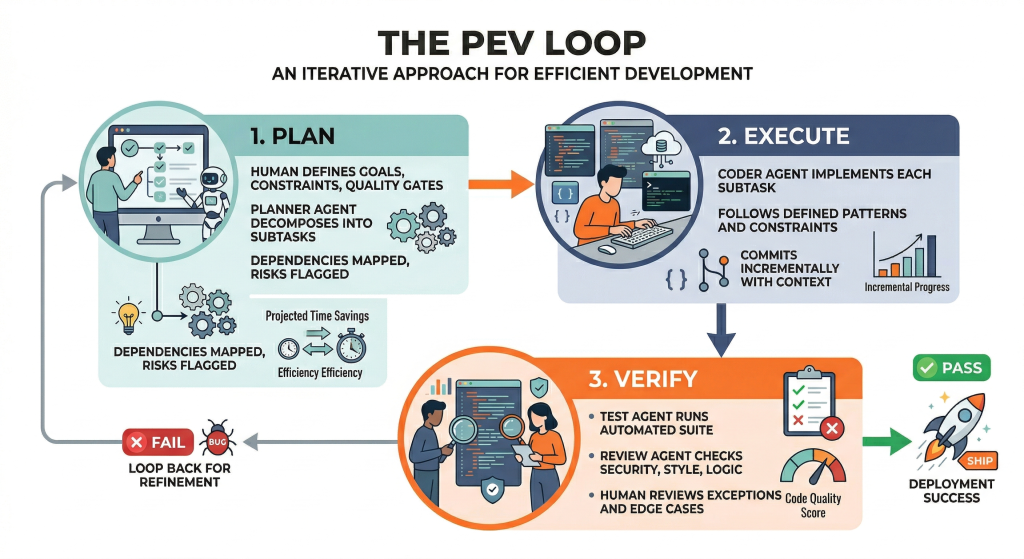

The PEV Loop: Plan ➡️ Execute ➡️ Verify

The backbone of agentic engineering is a structured feedback loop that mirrors professional software engineering practices — just executed by AI agents under human oversight.
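In code, the loop can be surprisingly small. The sketch below is one possible shape, not a reference implementation: `run_pev`, `Verdict`, and `PevResult` are hypothetical names, and the three callables stand in for whatever planner, executor, and verifier agents a team actually wires up.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Verdict:
    passed: bool
    feedback: str = ""


@dataclass
class PevResult:
    output: str
    attempts: int
    passed: bool
    history: List[str] = field(default_factory=list)


def run_pev(task: str,
            plan: Callable[[str], List[str]],
            execute: Callable[[List[str], str], str],
            verify: Callable[[str], Verdict],
            max_attempts: int = 3) -> PevResult:
    """Plan once, then execute and verify until the check passes or we give up."""
    steps = plan(task)
    feedback = ""
    output = ""
    history: List[str] = []
    for attempt in range(1, max_attempts + 1):
        # The executor sees the verifier's last feedback, so each retry is informed.
        output = execute(steps, feedback)
        verdict = verify(output)
        history.append(verdict.feedback or "ok")
        if verdict.passed:
            return PevResult(output, attempt, True, history)
        feedback = verdict.feedback
    # Out of attempts: return the failure so a human can take over.
    return PevResult(output, max_attempts, False, history)
```

The bounded `max_attempts` is the design choice that matters here. An unbounded retry loop is just vibe coding with extra steps; a bounded one ends in either a verified result or an explicit handoff to a person.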

Real-World Agentic Engineering in Production (2025–2026)

This isn’t theoretical. Agentic systems are already running in production at scale:

🏭 Siemens (Predictive Maintenance)

Multi-agent AI systems analyze equipment sensor data, predict failures, schedule maintenance, and coordinate field teams — all autonomously, with human review triggered only for exceptions.

🏦 Bank of America (Workflow Orchestration)

Agents handle customer requests end-to-end, routing complex cases to humans only when required. Human engineers designed the routing logic, constraints, and escalation policies.

📦 Amazon (Warehouse Coordination)

Multi-agent systems coordinate fleets of physical robots for inventory, packing, and fulfillment — with human engineers maintaining the orchestration layer.

💊 Moderna (Drug Discovery)

Agentic systems autonomously analyze research data, simulate outcomes, and surface candidates — dramatically accelerating timelines while keeping human scientists in the decision loop.

The pattern across all of these? Constrained autonomy. Agents make decisions, but within a framework that humans designed and continue to govern.

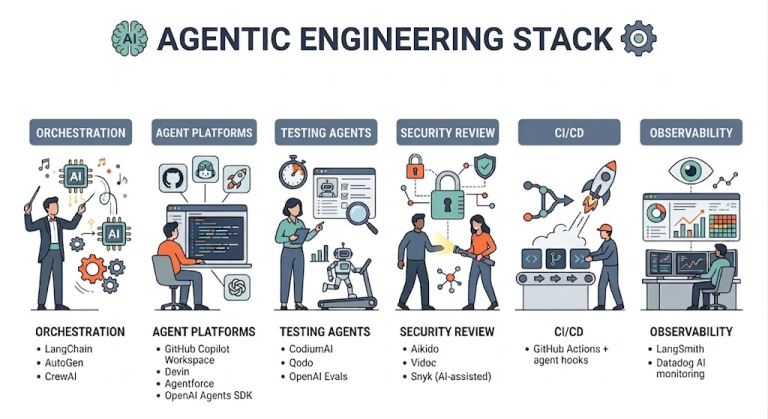

The Agentic Engineering Toolkit (2026)

Part 4: The Direct Comparison ⚔️

Part 5: A Tale of Two Engineers 👥

Same team. Same sprint. Same feature request: “Build a user authentication system.”

🎸 The Vibe Coder’s Week

Monday 9:03am. Opens Cursor. Types: “Add user login with JWT auth and password reset.” AI generates 340 lines across 6 files. Looks reasonable. Accept all.

Monday 9:47am. Manually tests in the browser. Login works. Password reset email arrives. Ships to production.

Monday feels great. Task closed in under an hour.

Three weeks later. A security researcher emails. Token validation never checked expiry. The password reset link isn't invalidated after use. There's no rate limiting on the login endpoint, so brute-force attacks are trivially easy. The fix requires rewriting the auth layer. But the auth layer is entangled with session management, which is entangled with the user model, which was also AI-generated and barely understood.
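Two of those three flaws, missing expiry and reusable reset links, come down to a few lines of logic that nobody asked for. Here is a minimal in-memory sketch using only the standard library. `ResetTokenStore` is a hypothetical class, the one-hour default TTL is an assumption, and a real system would persist the digests in a database rather than a dict.

```python
import hashlib
import secrets
import time
from typing import Callable, Dict


class ResetTokenStore:
    """Issues password reset tokens that expire and can be used only once."""

    def __init__(self, ttl_seconds: float = 3600,
                 clock: Callable[[], float] = time.time) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._expiry_by_digest: Dict[str, float] = {}

    @staticmethod
    def _digest(token: str) -> str:
        # Store only a hash, so a leaked table does not leak usable tokens.
        return hashlib.sha256(token.encode()).hexdigest()

    def issue(self) -> str:
        token = secrets.token_urlsafe(32)
        self._expiry_by_digest[self._digest(token)] = self._clock() + self._ttl
        return token

    def consume(self, token: str) -> bool:
        # pop() removes the entry on first use, so a replayed link fails.
        expires_at = self._expiry_by_digest.pop(self._digest(token), None)
        return expires_at is not None and self._clock() < expires_at
```

Rate limiting on the login endpoint is the third piece and is not covered by this sketch.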

The fix takes a week. And leaves scars.

🤖 The Agentic Engineer’s Week

Monday 9:03am. Defines requirements: OWASP auth compliance, refresh token rotation, rate limiting, 90% test coverage, no hardcoded secrets.

Sets up an agent pipeline: Planner → Coder → Test → Security Review → Human sign-off gate.

Monday 2:00pm. Planner Agent has decomposed the task into 11 subtasks with dependencies mapped. Coder Agent has implemented 8 of them.

Tuesday morning. Test Agent reports 94% coverage. Security Review Agent flags one issue: the password reset token TTL was set to 24 hours instead of the specified 1 hour. Rate limiting is confirmed. Token expiry is validated.

Tuesday afternoon. Human engineer reviews the security agent’s report, confirms the reset token TTL has been corrected to 1 hour, and approves. Ships to production.

Three weeks later. System handles a 10x traffic spike. Zero security incidents. A new engineer joins and can actually read the code.

The feature request was the same. The difference was the discipline around the AI, not the AI itself.
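The agentic engineer's pipeline (Planner → Coder → Test → Security Review → Human sign-off gate) can be pictured as a list of stages that each report pass or fail, with a human decision at the end. This is a sketch under stated assumptions: `run_pipeline`, `StageReport`, and `coverage_gate` are hypothetical names, and each stage here is a plain function standing in for an agent call.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StageReport:
    stage: str
    ok: bool
    notes: str = ""


Stage = Tuple[str, Callable[[dict], StageReport]]


def coverage_gate(minimum: float) -> Callable[[dict], StageReport]:
    """Build a test stage that fails when coverage is below the requirement."""
    def stage(context: dict) -> StageReport:
        coverage = context.get("coverage", 0.0)
        return StageReport("test", coverage >= minimum, f"coverage={coverage:.0%}")
    return stage


def run_pipeline(stages: List[Stage], context: dict,
                 human_approves: Callable[[List[StageReport]], bool]
                 ) -> Tuple[bool, List[StageReport]]:
    """Run stages in order; stop at the first failure; end with human sign-off."""
    reports: List[StageReport] = []
    for _name, stage in stages:
        report = stage(context)
        reports.append(report)
        if not report.ok:
            # A failed stage never reaches the human gate or production.
            return False, reports
    # Every automated stage passed; the final decision is still a person's.
    return bool(human_approves(reports)), reports
```

With `coverage_gate(0.90)` and a context of `{"coverage": 0.94}`, the test stage passes and the outcome depends entirely on what `human_approves` returns. That mirrors Tuesday afternoon in the story: the agents produce the report, and a person decides whether to ship.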

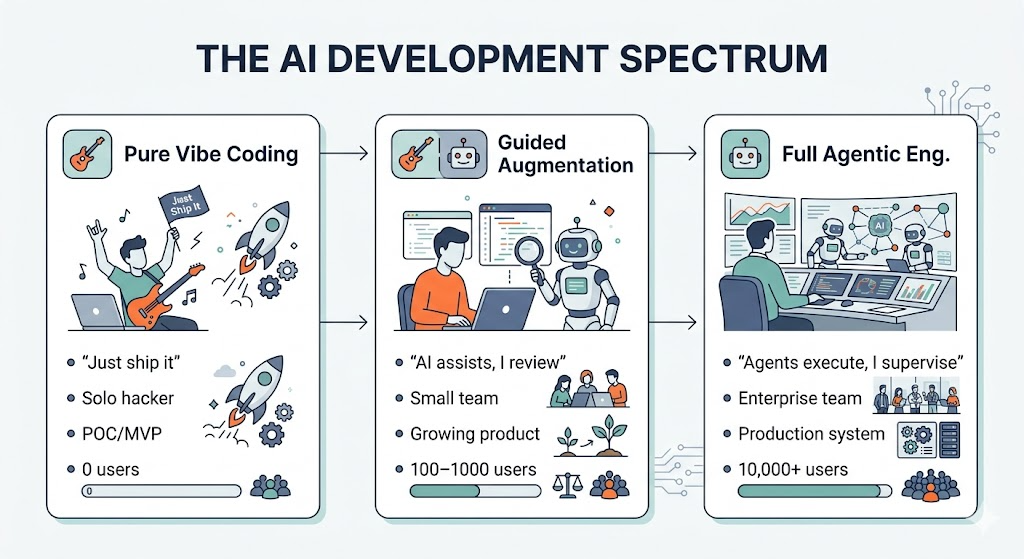

Part 6: The Spectrum — It's Not Binary 🌈

Here’s the nuance that often gets lost in the debate: this isn’t an either/or choice. It’s a spectrum, and the best teams in 2026 move along it deliberately.

The most dangerous place to be? Acting like you’re on the left side when you’re actually on the right.

The moment real users, real data, or real money enters the picture — you need to shift right.

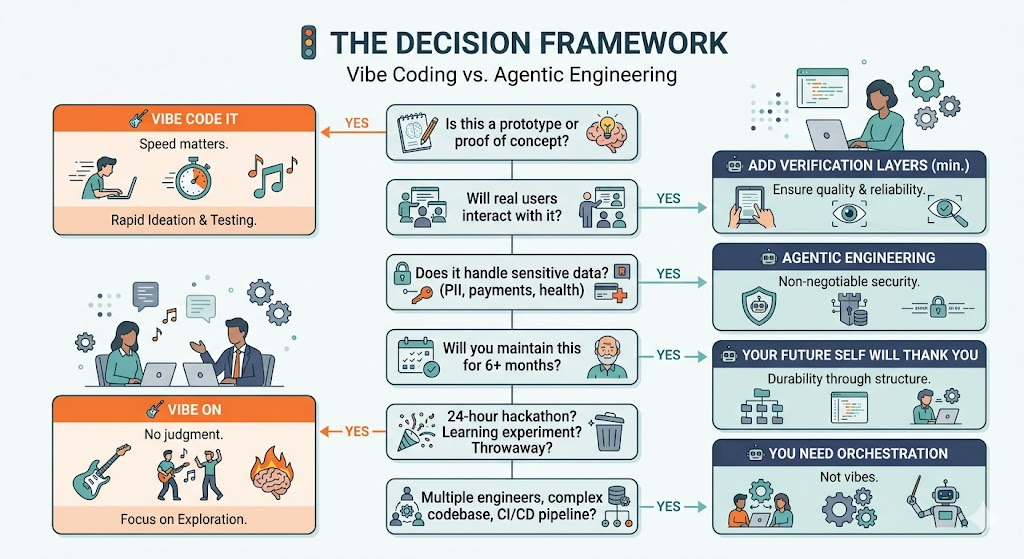

Part 7: When to Use Which 🚦

Use this as a mental checklist before you start:

Part 8: The Future of Both 🔭

Gartner predicts that by the end of 2026, 40% of enterprise applications will feature task-specific AI agents, up from less than 5% in 2025. That’s not a trend. That’s a transformation.

But vibe coding isn’t going away either. It’s evolving. The next generation of tools is closing the gap — bringing lightweight verification, one-click security scanning, and automated test generation into the “vibe” workflow itself. The line between the two paradigms is blurring.

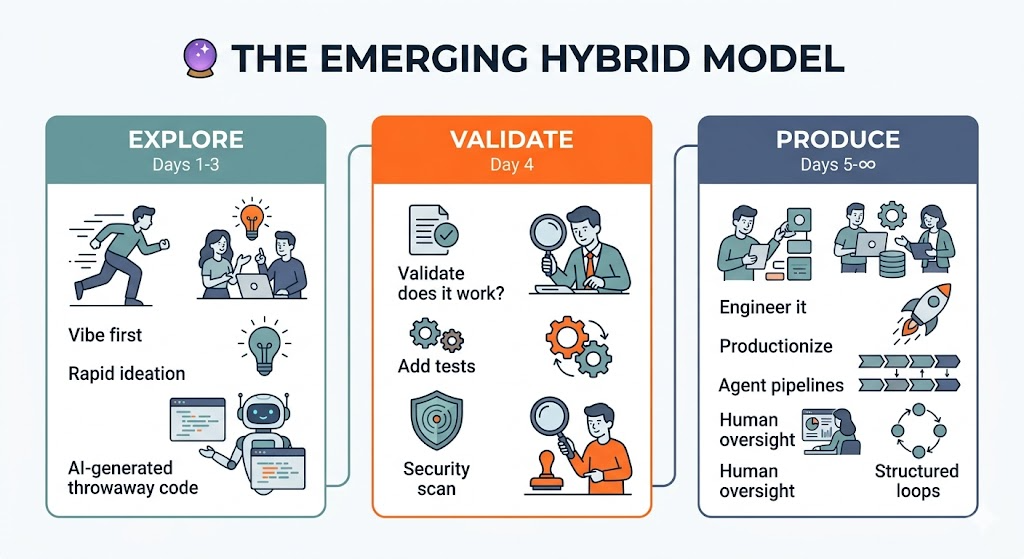

What’s emerging is something like this:

Vibe first, engineer second. Use vibe coding to explore the problem space quickly. Once you have something worth keeping, migrate it into a properly structured, agent-orchestrated pipeline.

The developers who will thrive in this era aren’t those who write the most code — or even prompt the most cleverly. They’re the ones who know which mode to be in for the problem they’re solving, and who can make the transition when the stakes change.

🎯 TL;DR

The question is no longer "can AI write this code?"

It's "am I the right kind of engineer to know what to do with it?"